The Main Thread

Principles over hacks. Systems over shortcuts.

LLM • Mar 9, 2026 • 14 min read

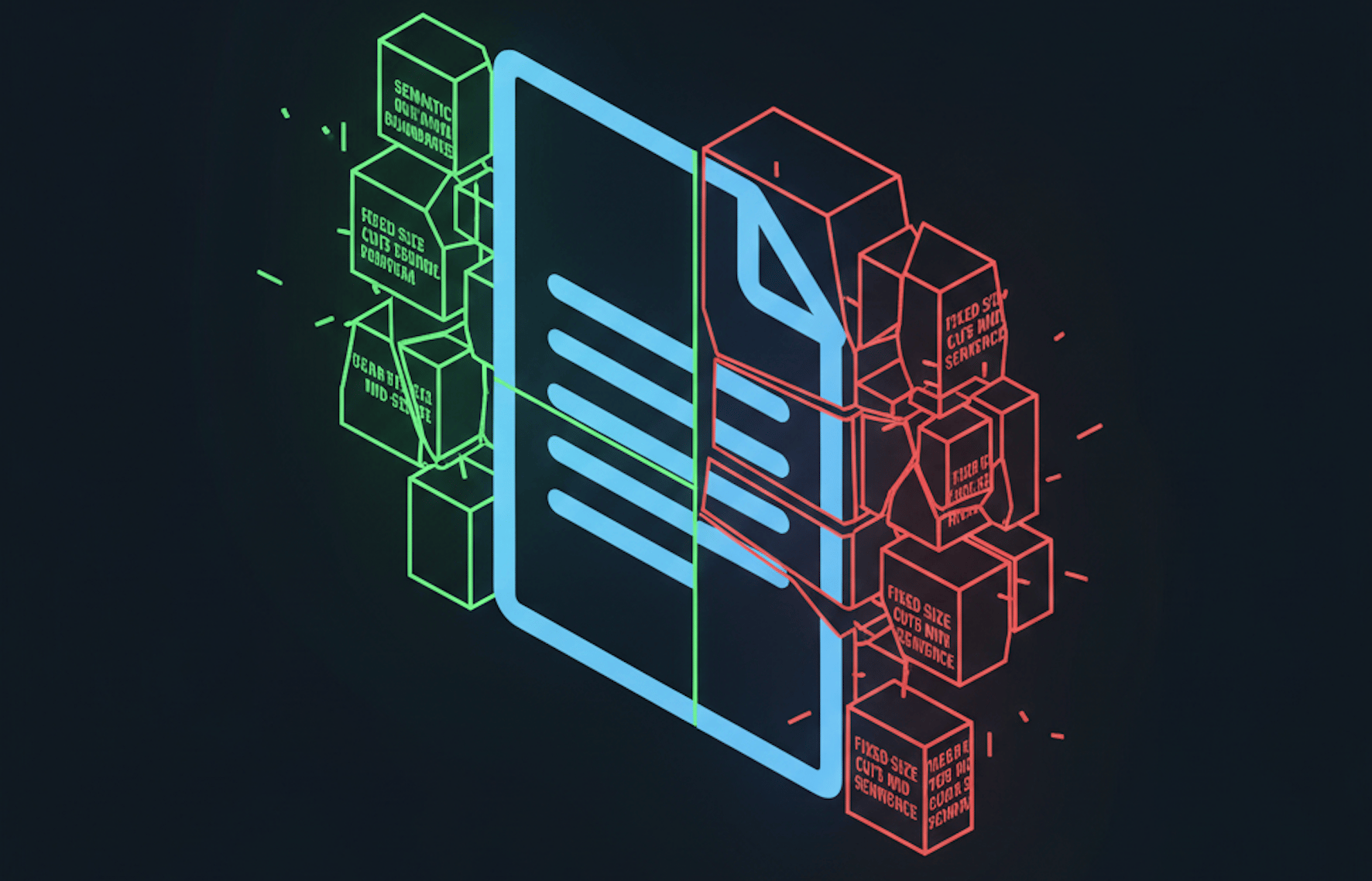

Context Window Management: The Hidden Engineering Problem

A 128K-token context window doesn’t mean you should use all 128K tokens

LLM • Feb 23, 2026 • 6 min read

Don't Break Production With a Retry Loop

Your LLM application will hit rate limits. Here's how to handle them gracefully with exponential backoff, token buckets, and priority queuing.