Hey everyone, welcome to the twenty-fourth issue of The Main Thread.

Continuing our commitment to understanding AI engineering deeply by peeling back the layers and discussing the patterns around it, this issue covers context window management and how to avoid paying more for worse results.

Introduction

Let’s say your LLM provider supports a 128K context window. That doesn’t mean you should use 128K tokens. I know this sounds like I am telling you not to use the thing you are paying for, but here is what nobody mentions: longer context doesn’t mean better results. Often, it means mediocre or even worse results at higher cost.

I see engineers on X or Reddit, and even some AI companies, boast about how they stuff their entire codebase into a prompt. What they fail to mention is that their model “forgot” the critical instructions buried in the middle.

These teams often shoot themselves in the foot with production bills because they assumed “more context = more accurate”. I have personally worked with RAG systems that retrieved 50 relevant chunks but performed worse than systems that retrieved only 5.

Context window management is one of those problems that seem trivial until we are in production. Then it becomes the difference between an AI feature that delights users and one that frustrates them.

Let’s fix that.

The “Lost In The Middle” Problem

Before we talk solutions, we need to understand why this matters.

In 2023, researchers at Stanford published a paper that should have been a wake-up call for every AI engineer. The task they gave language models was simple: find a specific fact buried somewhere in a long context. The models performed well when the fact was at the beginning or the end, but accuracy dropped by 20-30% when the fact was in the middle.

This is the “lost in the middle” problem, and it affects every major LLM.

Position in context:    Beginning    Middle    End
Recall accuracy:        ~95%         ~65%      ~90%

This is because attention mechanisms create U-shaped recall curves, which are strong at the edges and weak in the center. This is why language models don’t “see” all tokens equally.

What does this mean practically? If we stuff 100K tokens into our prompt, there’s a high chance that the model will effectively ignore 30-40% of them. We end up paying for tokens that do not contribute to the answer.

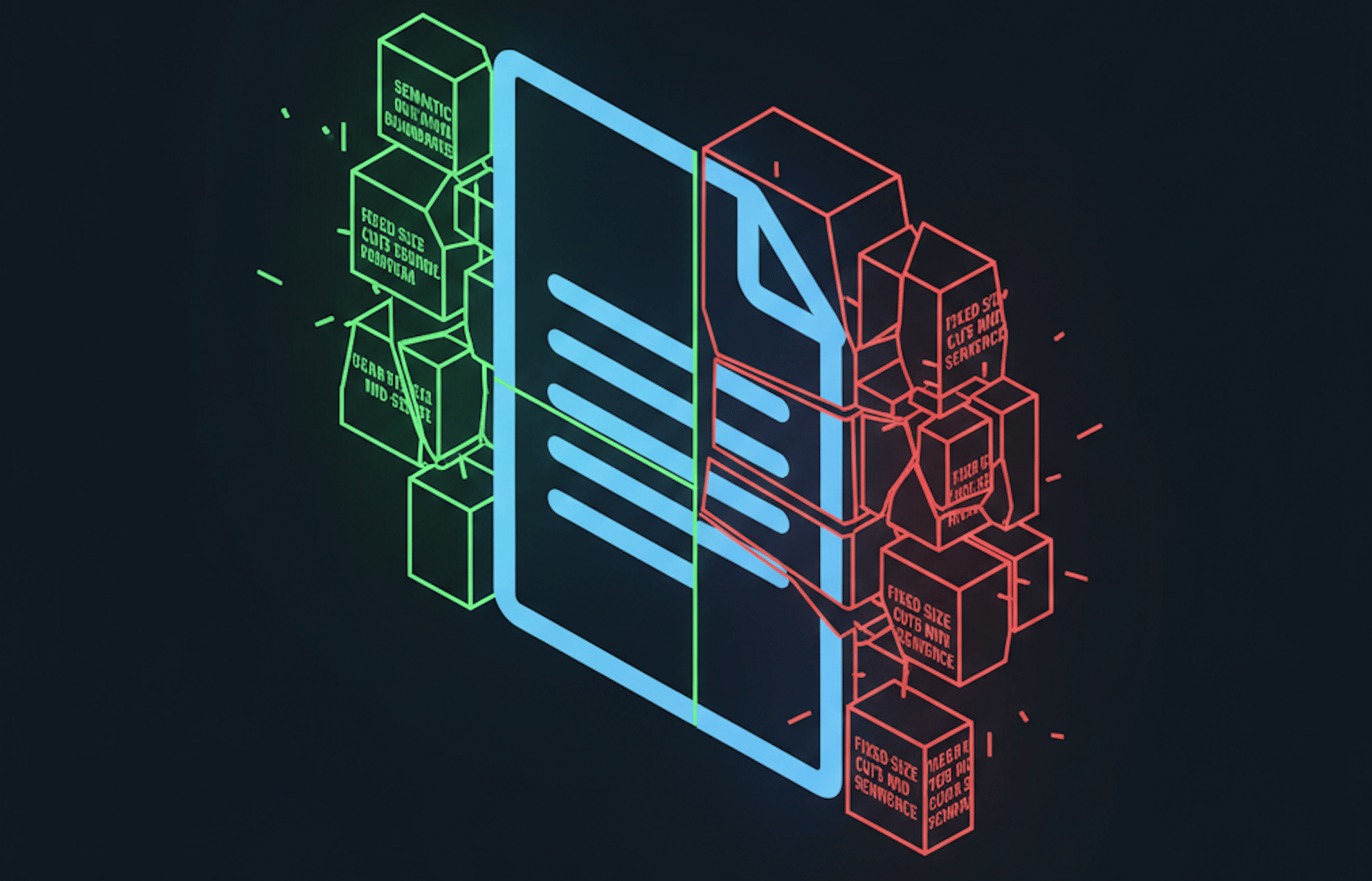
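One way to act on the U-shaped curve is to put the strongest retrieved chunks at the edges of the prompt and let the weakest ones sit in the middle. The sketch below assumes the chunks arrive sorted from most to least relevant; `reorder_for_edges` is a hypothetical helper name, not part of any library.

```python
def reorder_for_edges(ranked_chunks: list[str]) -> list[str]:
    """
    Reorder chunks so the most relevant sit at the start and end.

    Assumes ranked_chunks is sorted from most to least relevant.
    """
    front: list[str] = []
    back: list[str] = []
    for i, chunk in enumerate(ranked_chunks):
        # Alternate: even ranks go to the front, odd ranks to the back
        if i % 2 == 0:
            front.append(chunk)
        else:
            back.append(chunk)
    # Reverse the back half so the second-best chunk ends up last
    return front + back[::-1]


ranked = ["A", "B", "C", "D", "E"]  # "A" is the most relevant
print(reorder_for_edges(ranked))  # ['A', 'C', 'E', 'D', 'B']
```

Whether this helps depends on the model and the task, so it is worth measuring against a plain relevance ordering before adopting it.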

Three Chunking Strategies

Most AI applications need to break documents into chunks before embedding or including them in prompts. What dramatically affects retrieval quality is how we chunk.

1. Fixed-Size Chunking

It is the simplest approach where we split text into chunks of N tokens (or characters) with optional overlap.

def fixed_size_chunk(text: str, chunk_size: int = 512, overlap: int = 50) -> list[str]:
    """
    Split text into fixed-size chunks with overlap.

    Args:
        text: Input text to chunk
        chunk_size: Target size per chunk (in characters)
        overlap: Characters to overlap between chunks

    Returns:
        List of text chunks
    """
    # Guard against an overlap large enough to stall the loop
    if chunk_size <= 0 or not 0 <= overlap < chunk_size * 0.8:
        raise ValueError("overlap must be >= 0 and below 80% of chunk_size")

    chunks = []
    start = 0
    while start < len(text):
        end = start + chunk_size
        chunk = text[start:end]

        # Try to break at sentence boundary
        if end < len(text):
            last_period = chunk.rfind('.')
            if last_period > chunk_size * 0.8:  # Don't break too early
                end = start + last_period + 1
                chunk = text[start:end]

        chunks.append(chunk.strip())
        if end >= len(text):
            break  # Reached the end; avoid emitting a duplicate tail chunk
        start = end - overlap

    return [c for c in chunks if c]  # Filter empty chunks

Pros

Simple

Predictable chunk sizes

Easy to estimate token counts

Cons

Breaks semantic units arbitrarily

A paragraph about one concept might be split across two chunks

Embedding of each chunk captures incomplete ideas

When to use?

Quick prototyping

Uniform content like logs or structured data

2. Semantic Chunking

In this technique, we split at natural boundaries of language such as paragraphs, sections, or sentences. This strategy respects the document structure.

import re

def semantic_chunk(text: str, max_chunk_size: int = 1000) -> list[str]:
    """
    Split text at semantic boundaries (paragraphs, sections).

    Args:
        text: Input text to chunk
        max_chunk_size: Maximum characters per chunk

    Returns:
        List of semantically coherent chunks
    """
    # Split by double newlines (paragraphs) or headers
    sections = re.split(r'\n\n+|(?=^#{1,3}\s)', text, flags=re.MULTILINE)
    sections = [s.strip() for s in sections if s.strip()]

    chunks = []
    current_chunk = ""

    for section in sections:
        # If section alone exceeds max, split by sentences
        if len(section) > max_chunk_size:
            sentences = re.split(r'(?<=[.!?])\s+', section)
            for sentence in sentences:
                if len(current_chunk) + len(sentence) > max_chunk_size:
                    if current_chunk:
                        chunks.append(current_chunk.strip())
                    current_chunk = sentence
                else:
                    current_chunk += " " + sentence
        # Otherwise, accumulate sections
        elif len(current_chunk) + len(section) > max_chunk_size:
            chunks.append(current_chunk.strip())
            current_chunk = section
        else:
            current_chunk += "\n\n" + section

    if current_chunk.strip():
        chunks.append(current_chunk.strip())

    return chunks

Pros

Preserves meaning within chunks

Better embeddings because each chunk represents a complete idea

Cons

Variable chunk sizes

Some chunks might be tiny (a single heading), others large (a long paragraph)

When to use?

Documentation, articles or any prose content where semantic coherence matters

3. Recursive Chunking

This is a sophisticated approach where we try to split at large boundaries first (sections), then medium (paragraphs), then small (sentences), until chunks are the right size.

from typing import Optional

def recursive_chunk(
    text: str,
    chunk_size: int = 1000,
    separators: Optional[list[str]] = None
) -> list[str]:
    """
    Recursively split text, trying larger separators first.

    Args:
        text: Input text to chunk
        chunk_size: Target maximum chunk size
        separators: Ordered list of separators to try

    Returns:
        List of chunks
    """
    if separators is None:
        separators = [
            "\n## ",   # Markdown h2
            "\n### ",  # Markdown h3
            "\n\n",    # Paragraphs
            "\n",      # Lines
            ". ",      # Sentences
            " ",       # Words
        ]

    # Base case: text is small enough
    if len(text) <= chunk_size:
        return [text.strip()] if text.strip() else []

    # Try each separator
    for i, separator in enumerate(separators):
        if separator in text:
            parts = text.split(separator)
            chunks = []
            current = ""

            for part in parts:
                candidate = current + separator + part if current else part
                if len(candidate) <= chunk_size:
                    current = candidate
                else:
                    if current:
                        chunks.append(current.strip())
                    # Recursively chunk the part if it's too large
                    if len(part) > chunk_size:
                        chunks.extend(recursive_chunk(part, chunk_size, separators[i + 1:]))
                        current = ""  # Already emitted above; reset to avoid duplicates
                    else:
                        current = part

            if current.strip():
                chunks.append(current.strip())

            return chunks

    # No separator worked, force split
    return [text[i:i+chunk_size] for i in range(0, len(text), chunk_size)]

Pros

Best of both worlds: respects semantics while maintaining chunk size bounds

Adapts to document structure automatically

Cons

More complex

Behavior depends on separator ordering

When to use?

Production RAG systems where retrieval quality matters


Context Compression: Fit More Signal, Less Noise

Compression strategies help when we need information from a large document but can’t fit it all in context.

1. Extractive Summarization

Here we pull out key sentences rather than the full document.

from sentence_transformers import SentenceTransformer
import numpy as np

def extract_key_sentences(
    text: str,
    query: str,
    num_sentences: int = 5,
    model_name: str = "all-MiniLM-L6-v2"
) -> str:
    """
    Extract sentences most relevant to the query.

    Args:
        text: Source document
        query: What we're looking for
        num_sentences: How many sentences to extract
        model_name: Sentence-transformers model used for embeddings

    Returns:
        Extracted key sentences as a single string
    """
    model = SentenceTransformer(model_name)

    # Split into sentences
    sentences = [s.strip() for s in text.split('.') if s.strip()]
    if len(sentences) <= num_sentences:
        return text

    # Embed query and sentences (normalized, so dot product = cosine similarity)
    query_embedding = model.encode(query, normalize_embeddings=True)
    sentence_embeddings = model.encode(sentences, normalize_embeddings=True)

    # Score by similarity to query
    similarities = np.dot(sentence_embeddings, query_embedding)

    # Get top sentences, preserving original order
    top_indices = np.argsort(similarities)[-num_sentences:]
    top_indices = sorted(top_indices)  # Preserve document order

    extracted = '. '.join([sentences[i] for i in top_indices])
    return extracted + '.'

We should use this technique when we need to reference a document but only some parts of it are relevant to the current query.

2. LLM-Based Summarization

This technique has two steps: first, we use a cheaper model to summarize, then feed the summary to our main model.

def hierarchical_summarize(
    chunks: list[str],
    client,
    max_summary_tokens: int = 500
) -> str:
    """
    Summarize chunks hierarchically, then combine.

    Args:
        chunks: List of text chunks to summarize
        client: OpenAI client
        max_summary_tokens: Target tokens per summary

    Returns:
        Final combined summary
    """
    # First pass: summarize each chunk
    chunk_summaries = []
    for chunk in chunks:
        response = client.chat.completions.create(
            model="gpt-4o-mini",  # Cheap model for summarization
            messages=[{
                "role": "user",
                "content": f"Summarize this in 2-3 sentences:\n\n{chunk}"
            }],
            max_tokens=150
        )
        chunk_summaries.append(response.choices[0].message.content)

    # Second pass: combine summaries
    combined = "\n".join(chunk_summaries)

    if len(combined) > max_summary_tokens * 4:  # Rough char estimate
        response = client.chat.completions.create(
            model="gpt-4o-mini",
            messages=[{
                "role": "user",
                "content": f"Combine these summaries into one coherent summary:\n\n{combined}"
            }],
            max_tokens=max_summary_tokens
        )
        return response.choices[0].message.content

    return combined

We should use this technique for processing long documents (research papers, books) where we need the gist and not every detail.

Sliding Window for Conversations

Conversations grow unbounded. A 50-message thread can easily exceed the context limits. To tackle this problem, we use sliding window strategies that keep recent context while managing length.

def sliding_window_messages(
    messages: list[dict],
    max_tokens: int = 4000,
    tokens_per_message: int = 100  # Rough estimate
) -> list[dict]:
    """
    Keep recent messages within token budget.

    Always preserves:
    - System message (first)
    - Current user message (last)
    - As much recent history as fits

    Args:
        messages: Full conversation history
        max_tokens: Token budget for context
        tokens_per_message: Estimated tokens per message

    Returns:
        Truncated message list
    """
    if not messages:
        return messages

    system_message = None
    if messages[0]["role"] == "system":
        system_message = messages[0]
        messages = messages[1:]

    current_message = messages[-1] if messages else None
    history = messages[:-1] if len(messages) > 1 else []

    # Calculate budget
    reserved = tokens_per_message * 2  # System + current
    history_budget = max_tokens - reserved

    # Take recent messages that fit
    selected_history = []
    tokens_used = 0

    for msg in reversed(history):
        msg_tokens = len(msg.get("content", "")) // 4  # Rough token estimate
        if tokens_used + msg_tokens > history_budget:
            break
        selected_history.insert(0, msg)
        tokens_used += msg_tokens

    # Reconstruct
    result = []
    if system_message:
        result.append(system_message)
    result.extend(selected_history)
    if current_message:
        result.append(current_message)

    return result

There’s a smarter way to do it. Instead of dropping old messages, we summarize them:

def sliding_window_with_summary(
    messages: list[dict],
    client,
    max_tokens: int = 4000,
    summary_threshold: int = 20
) -> list[dict]:
    """
    Summarize old messages instead of dropping them.

    Args:
        messages: Full conversation history
        client: OpenAI client
        max_tokens: Token budget (not enforced in this simplified version)
        summary_threshold: Messages before we start summarizing

    Returns:
        Messages with older content summarized
    """
    if len(messages) < summary_threshold:
        return messages

    system_message = None
    if messages[0]["role"] == "system":
        system_message = messages[0]
        messages = messages[1:]

    # Split: old messages to summarize, recent to keep
    split_point = len(messages) - 10  # Keep last 10 messages
    old_messages = messages[:split_point]
    recent_messages = messages[split_point:]

    # Summarize old conversation
    old_text = "\n".join([
        f"{m['role']}: {m['content']}"
        for m in old_messages
    ])

    response = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{
            "role": "user",
            "content": f"Summarize this conversation history in 2-3 sentences, focusing on key decisions and context:\n\n{old_text}"
        }],
        max_tokens=200
    )
    summary = response.choices[0].message.content

    # Reconstruct with summary
    result = []
    if system_message:
        result.append(system_message)
    result.append({
        "role": "system",
        "content": f"Previous conversation summary: {summary}"
    })
    result.extend(recent_messages)

    return result

This works because recent messages have immediate relevance whereas old messages provide background context. Summarizing them preserves the background while freeing token budget for recent detail.

Priority-Based Context Selection

Not all context is equal. Some information is critical, some is nice-to-have, and some is noise. Assigning a priority to each piece of context helps us spend our token budget where it counts.

from dataclasses import dataclass
from enum import Enum

class Priority(Enum):
    CRITICAL = 1  # Must include: user instructions, current query
    HIGH = 2      # Important: relevant retrieved documents
    MEDIUM = 3    # Helpful: related context, examples
    LOW = 4       # Optional: background, nice-to-have

@dataclass
class ContextItem:
    content: str
    priority: Priority
    tokens: int

def build_context(
    items: list[ContextItem],
    max_tokens: int
) -> str:
    """
    Build context by priority, fitting within token budget.

    Args:
        items: Context items with priorities
        max_tokens: Available token budget

    Returns:
        Combined context string
    """
    # Sort by priority
    sorted_items = sorted(items, key=lambda x: x.priority.value)

    selected = []
    tokens_used = 0

    for item in sorted_items:
        if tokens_used + item.tokens <= max_tokens:
            selected.append(item)
            tokens_used += item.tokens
        elif item.priority == Priority.CRITICAL:
            # Always include critical items, even if over budget
            selected.append(item)
            tokens_used += item.tokens

    # Items are joined in priority order; re-sort here if another order is needed
    return "\n\n---\n\n".join([item.content for item in selected])

####### Usage #######
# user_query, system_prompt, retrieved_doc_1, retrieved_doc_2,
# user_preferences and background_info are assumed to be defined elsewhere.

context_items = [
    ContextItem(
        content=f"User query: {user_query}",
        priority=Priority.CRITICAL,
        tokens=50
    ),
    ContextItem(
        content=system_prompt,
        priority=Priority.CRITICAL,
        tokens=200
    ),
    ContextItem(
        content=retrieved_doc_1,
        priority=Priority.HIGH,
        tokens=500
    ),
    ContextItem(
        content=retrieved_doc_2,
        priority=Priority.HIGH,
        tokens=500
    ),
    ContextItem(
        content=user_preferences,
        priority=Priority.MEDIUM,
        tokens=100
    ),
    ContextItem(
        content=background_info,
        priority=Priority.LOW,
        tokens=1000
    ),
]

final_context = build_context(context_items, max_tokens=2000)

This matters because in RAG systems, we might receive 20 relevant documents but only 5 fit in context. Priority ensures we include the most relevant 5, not just the first 5.

Cost Reality

Context is about quality, yes, but it is also about the bill. Let’s talk money. The following are the standard prices of GPT-5.2 and Claude Opus 4.6:

| Model | Input Cost (per 1M tokens) | Output Cost (per 1M tokens) |
|---|---|---|
| GPT-5.2 | $1.75 | $14.00 |
| Claude Opus 4.6 | $5.00 | $25.00 |

Now, do the math:

# Scenario: RAG application
# 1000 queries per day
# Each query stuffs 50K tokens of context
daily_input_tokens = 1000 * 50_000  # 50M tokens

# GPT-5.2
daily_input_cost_gpt5_2 = (50 * 1.75)  # $87.50/day for input alone
monthly_cost_gpt5_2 = daily_input_cost_gpt5_2 * 30  # $2,625/month

# Claude Opus 4.6
daily_input_cost_claude = (50 * 5.00)  # $250/day for input alone
monthly_cost_claude = daily_input_cost_claude * 30  # $7,500/month

But what if we optimized for 10K tokens per query instead:

optimized_daily = 1000 * 10_000  # 10M tokens

# GPT-5.2
optimized_cost_gpt5_2 = (10 * 1.75)  # $17.50/day
optimized_monthly_gpt5_2 = optimized_cost_gpt5_2 * 30  # $525

# Claude Opus 4.6
optimized_cost_claude = (10 * 5.00)  # $50/day
optimized_monthly_claude = optimized_cost_claude * 30  # $1,500

monthly_savings_gpt5_2 = (87.50 - 17.50) * 30  # $2,100/month saved
monthly_savings_claude = (250 - 50) * 30  # $6,000/month saved

This is a 5x reduction in input costs. But here is the counterintuitive part: smaller contexts often perform better. Less noise, more signal. The model attends to what matters instead of drowning in irrelevant tokens.
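To rerun this arithmetic for your own traffic, the same calculation can be wrapped in a small helper. This is a minimal sketch: `monthly_input_cost` and its parameter names are my own, and the example plugs in the $1.75 per 1M input tokens GPT-5.2 price used above.

```python
def monthly_input_cost(
    queries_per_day: int,
    tokens_per_query: int,
    price_per_million: float,
    days: int = 30,
) -> float:
    """Estimate monthly input spend in dollars (output tokens not included)."""
    daily_tokens = queries_per_day * tokens_per_query
    daily_cost = daily_tokens / 1_000_000 * price_per_million
    return daily_cost * days


before = monthly_input_cost(1000, 50_000, 1.75)  # 50K tokens per query
after = monthly_input_cost(1000, 10_000, 1.75)   # 10K tokens per query
print(f"before=${before:,.2f} after=${after:,.2f} saved=${before - after:,.2f}")
```

Swap in your provider's current price and your real tokens-per-request numbers; the ratio between the two scenarios is what matters.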

Production Checklist

Before deploying any LLM feature, always audit your context strategy:

Chunking

✔ Using semantic or recursive chunking (not just fixed-size)

✔ Chunk size matches our retrieval/embedding model's sweet spot

✔ Overlap prevents losing information at boundaries

Retrieval

✔ Retrieving enough chunks for coverage, not so many we hit "lost in the middle"

✔ Priority ranking retrieved chunks by relevance

✔ Re-ranking before including in context (not just vector similarity)

Conversations

✔ Sliding window or summarization for long threads

✔ System prompts preserved across truncation

✔ Critical instructions at beginning AND end of context
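The last item in this list can be sketched as a small prompt builder that states the instructions before the context and repeats them after it. This is an illustration only: `build_prompt`, the `Reminder:` label, and the section layout are assumptions, not a required format.

```python
def build_prompt(instructions: str, context_chunks: list[str], query: str) -> str:
    """Place instructions before the context and repeat them after it."""
    context = "\n\n---\n\n".join(context_chunks)
    return (
        f"{instructions}\n\n"
        f"Context:\n{context}\n\n"
        f"Reminder: {instructions}\n\n"
        f"Question: {query}"
    )


prompt = build_prompt(
    "Answer only from the context.",
    ["Chunk one.", "Chunk two."],
    "What does chunk two say?",
)
print(prompt)
```

The repetition costs a few tokens but keeps the instructions in both high-recall regions of the window.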

Cost

✔ Monitoring tokens per request

✔ Alerting on anomalous context sizes

✔ Caching repeated context (embeddings, summaries)
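The monitoring and alerting items above could start as simply as tracking recent prompt sizes and flagging requests far above the norm. The sketch below is one possible shape, using only the standard library; `ContextSizeMonitor`, the 100-request window, and the 3-sigma threshold are all assumptions to tune for your traffic.

```python
from collections import deque
from statistics import mean, stdev


class ContextSizeMonitor:
    """Track recent prompt sizes and flag requests far above the norm."""

    def __init__(self, window: int = 100, threshold_sigmas: float = 3.0):
        self.sizes = deque(maxlen=window)  # Rolling window of token counts
        self.threshold_sigmas = threshold_sigmas

    def record(self, tokens: int) -> bool:
        """Record a request; return True if it looks anomalous."""
        anomalous = False
        if len(self.sizes) >= 10:  # Need some history before judging
            mu = mean(self.sizes)
            sigma = stdev(self.sizes)
            # Floor sigma at 1.0 so identical sizes don't trigger on tiny changes
            anomalous = tokens > mu + self.threshold_sigmas * max(sigma, 1.0)
        self.sizes.append(tokens)
        return anomalous


monitor = ContextSizeMonitor()
history = [1000, 1100, 950, 1050, 1000, 980, 1020, 990, 1010, 1000]
flags = [monitor.record(t) for t in history]
spike_flag = monitor.record(50_000)
print(flags, spike_flag)
```

Note that flagged values still enter the window and will inflate the statistics for a while; a production version might exclude them or use a median-based threshold.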

Quality

✔ Testing with target fact in beginning, middle, and end of context

✔ Measuring whether longer context actually improves answers

✔ A/B testing context strategies
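The first quality check can be set up with a small harness that plants the same fact at the beginning, middle, and end of otherwise identical filler. This sketch only builds the test contexts; calling the model and scoring the answers is left out, and `build_position_tests`, the filler text, and the example fact are all hypothetical.

```python
def build_position_tests(
    filler_paragraphs: list[str],
    needle: str,
) -> dict[str, str]:
    """Return one context per needle position: beginning, middle, end."""
    positions = {
        "beginning": 0,
        "middle": len(filler_paragraphs) // 2,
        "end": len(filler_paragraphs),
    }
    contexts = {}
    for name, index in positions.items():
        # Insert the needle at the chosen paragraph index
        paragraphs = filler_paragraphs[:index] + [needle] + filler_paragraphs[index:]
        contexts[name] = "\n\n".join(paragraphs)
    return contexts


filler = [f"Filler paragraph {i}." for i in range(6)]
needle = "The access code is 7421."
tests = build_position_tests(filler, needle)
for name, context in tests.items():
    print(name, context.index(needle))
```

Ask the model the same question against each context and compare accuracy per position; a large gap for the middle case is a sign the prompt is too long or poorly ordered.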

Takeaway

Context management is a leverage point. If we get it wrong, we pay for tokens that the model ignores. If we get it right, we deliver better answers at lower cost.

The context window of an LLM is a ceiling and should not be treated as a target. The best AI apps use context judiciously: exactly what’s needed, placed where the model can use it effectively, and structured to signal what the model should prioritize.

Our context strategy should be a part of our architecture. We must treat it that way.

What context management challenge are you facing? I am especially curious about conversation memory patterns: how are you handling long threads in production?

Reply and tell me. I read every response.

If this helped, share it with your team. And if you want more practical AI engineering insights, subscribe to The Main Thread: one deep dive per week, no fluff.

I’d love to connect with you on X @anirudhology.

Namaste!

— Anirudh